Rating MCP Server Quality: How We Score Tools, Skills & Annotations

The average MCP tool description is bad. Two recent arXiv papers measured exactly how bad. 97.1% of analyzed tools carry at least one description smell. 73% of servers reuse the same display_name across multiple tools. In head-to-head selection between five functionally equivalent servers, the one with a clearer description gets picked 72% of the time vs a 20% baseline.

A 260% selection lift from prose alone is the headline. Descriptions aren't documentation; they're part of the agent-facing prompt, and a bad one quietly costs you every selection round your server is in. So we built a scorer.
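For readers who want to check the headline number: the 260% is a relative lift over the uniform baseline, derived from the two rates quoted above. A quick sketch, with variable names that are ours and not the paper's:

```python
# Relative selection lift of the clearer description over a uniform baseline.
baseline_rate = 0.20   # one of five functionally equivalent servers picked at random
observed_rate = 0.72   # reported win rate for the clearer description

relative_lift = (observed_rate - baseline_rate) / baseline_rate
lift_percent = round(relative_lift * 100)

print(f"{lift_percent}% lift over baseline")
```

Put another way, the clearer description is picked 3.6 times as often as chance would predict.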

MCPBundles now runs an LLM-as-judge rubric over every published server, covering tool descriptions, server-level skill content, and the structured MCP annotations that the runtime actually reads. The verdict shows up on the public listing page.

The Research We Built On

Two papers, both submitted to arXiv in February 2026, set the foundation.

Paper 1, "MCP Tool Descriptions Are Smelly!" (arXiv 2602.14878, Hasan, Li, Rajbahadur, Adams, Hassan from SAIL Research). An empirical study of 856 tools across 103 MCP servers, scored on a six-component rubric: Purpose, Usage Guidelines, Limitations, Parameter Explanation, Examples vs Description, and Length. 97.1% of tools have at least one smell, and 56% fail to state their purpose clearly. Augmenting descriptions improved task success rate by a median of 5.85 percentage points but inflated execution steps by 67.5%, so longer is not automatically better. Per-component ICC reliability sits between 0.62 and 0.90 across three jury models, with five of six dimensions in the "good" reliability band.

Paper 2, "From Docs to Descriptions" (arXiv 2602.18914, Wang, Li, Sun, Liu, Liu, Tian from UCLA and NTU). A larger study of 10,831 servers and 18 smell categories across four quality dimensions: accuracy, functionality, information completeness, conciseness. The headline finding is that functionality matters most for tool selection (+11.6%, p < 0.001), followed by accuracy (+8.8%). When five functionally equivalent servers compete, the standard-compliant one wins 72% of the time vs the uniform 20% baseline. Broken down by query complexity, the win rate is 78% on minimal queries and still 68% on complete ones.

Both papers converge on the same operational point. Tool and server descriptions steer selection, argument filling, and the decision not to call a tool at all. They behave like prompt, and the failure mode is silent: an agent picks the wrong tool or no tool, and you never see why.

What The Rubric Scores

The scorer is LLM-as-judge with a single model (Claude Haiku 4.5) and structured-JSON output. It runs against the catalog the user actually sees, meaning the live server's tool list and the bundle's skill content. Scoring happens in two passes, one per tool and one for the server-level skill.

Pass 1: Per-tool scoring

Each tool gets graded on four dimensions, 1-5 each:

| # | Dimension | What it asks |
|---|---|---|
| 1 | Purpose Clarity | Can the AI tell what this tool does (action, target data, return shape) from the description alone? |
| 2 | Parameter Quality | Does every parameter have a description that explains meaning and behavioral effect, in the schema or in the prose? |
| 3 | Disambiguation | Can the AI choose this tool over similar siblings on the same server? |
| 4 | Density | Is every clause doing useful work, or is it filler and restating the tool name? |

A tool is labeled Bad if any single dimension drops below 3, Good otherwise.
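The labeling rule is a minimum, not an average: one weak dimension is enough to fail a tool. A minimal sketch of that rule, assuming the judge returns four integer scores; the `ToolScores` class and `label_tool` function are illustrative names, not the scorer's actual code:

```python
from dataclasses import dataclass, astuple


@dataclass(frozen=True)
class ToolScores:
    """Four per-tool rubric scores, each an integer from 1 to 5."""
    purpose_clarity: int
    parameter_quality: int
    disambiguation: int
    density: int


def label_tool(scores: ToolScores) -> str:
    """Return "Bad" if any single dimension is below 3, otherwise "Good"."""
    values = astuple(scores)
    if any(not 1 <= v <= 5 for v in values):
        raise ValueError("each dimension must be scored 1-5")
    return "Bad" if min(values) < 3 else "Good"


print(label_tool(ToolScores(5, 5, 2, 5)))  # one weak dimension fails the tool
print(label_tool(ToolScores(3, 3, 3, 3)))  # all dimensions at the threshold pass
```

Using the minimum means a tool with a strong description and undocumented parameters still fails, which an average would hide.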

This is a tightened adaptation of Paper 1's six-component framework. We collapsed Usage Guidelines and Limitations into Disambiguation and Purpose, and dropped Examples vs Description and Length as standalone dimensions. Paper 1's own ablations show that Examples is the least reliable signal (ICC 0.62, lowest of the six) and that Length correlates closely with the other four when measured directly. The four-dimension version is shorter, faster, and scores the same underlying property: can the agent use this tool correctly without reading the source?

Pass 2: Server skill scoring

The bundle's SKILL_CONTENT, the server-level guide that explains how the tools fit together, gets a separate rubric on six dimensions, 1-5 each:

- Domain Context — does the skill explain what domain the server covers, what data sources, what scope?

- Workflow Guidance — does it describe how tools chain together for real workflows?

- Tool Disambiguation — does it help the AI choose between similar tools?

- Operational Knowledge — does it convey domain-specific gotchas, thresholds, interpretation guidance?

- Additive Value — does it add information that individual tool descriptions don't already contain?

- Conciseness — is the skill focused, or padded with marketing and noise?

A server with one tool has no workflow surface and nothing to disambiguate, so Workflow Guidance and Tool Disambiguation auto-score 5 on such a server (the per-tool rubric applies the same "no siblings" rule to its Disambiguation dimension). The other four dimensions apply to every bundle.
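The single-tool rule can be sketched as a post-processing step over the judge's skill scores. Whether the real scorer applies it in the prompt or after the fact isn't stated here, so treat this as one possible shape; the dimension keys and the function name are assumptions:

```python
# Skill dimensions that have nothing to measure on a single-tool server.
SINGLE_TOOL_NA = ("workflow_guidance", "tool_disambiguation")


def apply_single_tool_rule(judge_scores: dict[str, int], tool_count: int) -> dict[str, int]:
    """Return a copy of the skill scores with the N/A dimensions set to 5
    when the server exposes exactly one tool. Other scores are untouched."""
    adjusted = dict(judge_scores)
    if tool_count == 1:
        for dimension in SINGLE_TOOL_NA:
            adjusted[dimension] = 5
    return adjusted


raw = {
    "domain_context": 4,
    "workflow_guidance": 1,
    "tool_disambiguation": 2,
    "operational_knowledge": 3,
    "additive_value": 4,
    "conciseness": 5,
}
print(apply_single_tool_rule(raw, tool_count=1))
```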

The skill scorer enforces one extra hard rule: a skill is automatically Bad if it instructs the user to obtain, paste, or configure credentials. MCPBundles owns credential capture per workspace, encrypts at rest, and injects server-side, so the model never sees credential values. A skill that says "Paste your API key in the Authorization header" is wrong on this platform regardless of how good the rest of it is.

Why MCP Annotations Matter

MCP defines a small set of structured annotations that servers can attach to each tool: readOnlyHint, destructiveHint, idempotentHint, openWorldHint. They are behavioral metadata that tell the host whether a tool reads or writes, whether its writes are destructive, whether repeating a call is safe, and whether it reaches systems outside the server's own closed domain. Hosts use them to gate consent prompts, batch reads, and route destructive calls through human review.
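To make that concrete, here is roughly what an annotated tool definition looks like, plus a toy host-side policy that reads it. The tool itself (`delete_invoice`) and the `requires_human_review` policy are hypothetical; the fallback defaults reflect our reading of the MCP spec (a tool with no hints is assumed to be a possibly destructive write), so check the spec before relying on them:

```python
# A hypothetical tool definition carrying all four MCP annotation hints.
delete_invoice_tool = {
    "name": "delete_invoice",
    "description": "Permanently delete one invoice by ID. Returns the deleted invoice's ID.",
    "inputSchema": {
        "type": "object",
        "properties": {"invoice_id": {"type": "string", "description": "ID of the invoice to delete."}},
        "required": ["invoice_id"],
    },
    "annotations": {
        "readOnlyHint": False,    # the tool modifies state
        "destructiveHint": True,  # the modification cannot be undone
        "idempotentHint": True,   # repeating the same call has no further effect
        "openWorldHint": False,   # stays inside the server's own closed domain
    },
}


def requires_human_review(tool: dict) -> bool:
    """Toy host policy: route writes that may be destructive through review.
    Missing hints fall back to the cautious defaults (not read-only, destructive)."""
    annotations = tool.get("annotations") or {}
    if annotations.get("readOnlyHint", False):
        return False
    return annotations.get("destructiveHint", True)


print(requires_human_review(delete_invoice_tool))
```

Note what happens to a tool with an empty annotations block under this policy: it gets treated as destructive, which is the safe default but also means an unannotated read-only tool pays for a consent prompt it doesn't need.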

Paper 2 frames missing annotations as an information completeness smell. The tool has the property, the description sometimes implies it, but the structured field is empty. That's a safety gap as much as a documentation one, because agent frameworks rely on the structured field rather than parsing prose for "this is read-only."

The MCPBundles scorer treats annotations as a first-class quality input rather than as a separate sub-score. The full annotations dict is included in every tool payload sent to the judge, so the rubric scores the description and the annotations together. A tool with an honest description and a populated readOnlyHint: true will outscore the same description with an empty annotations block on Disambiguation and Density, because the structured hint is doing real disambiguation work that prose otherwise has to do twice.
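A rough sketch of what "annotations in every tool payload" could look like. The field names mirror the catalog fields mentioned in this article (tool_description, input_schema, display_name, annotations); the function name and the exact JSON layout are assumptions, not the production format:

```python
import json


def build_judge_payload(tool: dict) -> str:
    """Serialize one tool for the judge. The annotations key is always present,
    even when empty, so a missing hint is visible rather than silently absent."""
    payload = {
        "display_name": tool["display_name"],
        "tool_description": tool["tool_description"],
        "input_schema": tool["input_schema"],
        "annotations": tool.get("annotations") or {},
    }
    return json.dumps(payload, sort_keys=True)


example_tool = {
    "display_name": "List invoices",
    "tool_description": "List invoices for one customer, newest first.",
    "input_schema": {"type": "object", "properties": {"customer_id": {"type": "string"}}},
    "annotations": {"readOnlyHint": True},
}
print(build_judge_payload(example_tool))
```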

Server-level smell detection runs a separate pass for repeated display_name values across tools, which was Paper 2's single biggest finding (73% of servers in their corpus). When detected, every offending tool slug surfaces as a priority-1 server-level suggestion in the actionable output. The MCP catalog generator persists annotations alongside tool_description, input_schema, and display_name, so the runtime sees them whether the score has run or not.
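The repeated-name check doesn't need an LLM at all. A minimal sketch, assuming each tool record carries a `slug` and a `display_name`; the case-insensitive comparison is our choice for the example and may differ from what the scorer does:

```python
from collections import defaultdict


def find_repeated_display_names(tools: list[dict]) -> dict[str, list[str]]:
    """Group tool slugs by normalized display name and keep only the names
    shared by more than one tool."""
    slugs_by_name: dict[str, list[str]] = defaultdict(list)
    for tool in tools:
        normalized = tool["display_name"].strip().casefold()
        slugs_by_name[normalized].append(tool["slug"])
    return {name: slugs for name, slugs in slugs_by_name.items() if len(slugs) > 1}


catalog = [
    {"slug": "search_users", "display_name": "Search"},
    {"slug": "search_orgs", "display_name": "search "},
    {"slug": "get_user", "display_name": "Get user"},
]
print(find_repeated_display_names(catalog))
```

Each key in the result is one smell, and its list of slugs is what a priority-1 suggestion would need to name.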

How The Verdict Surfaces

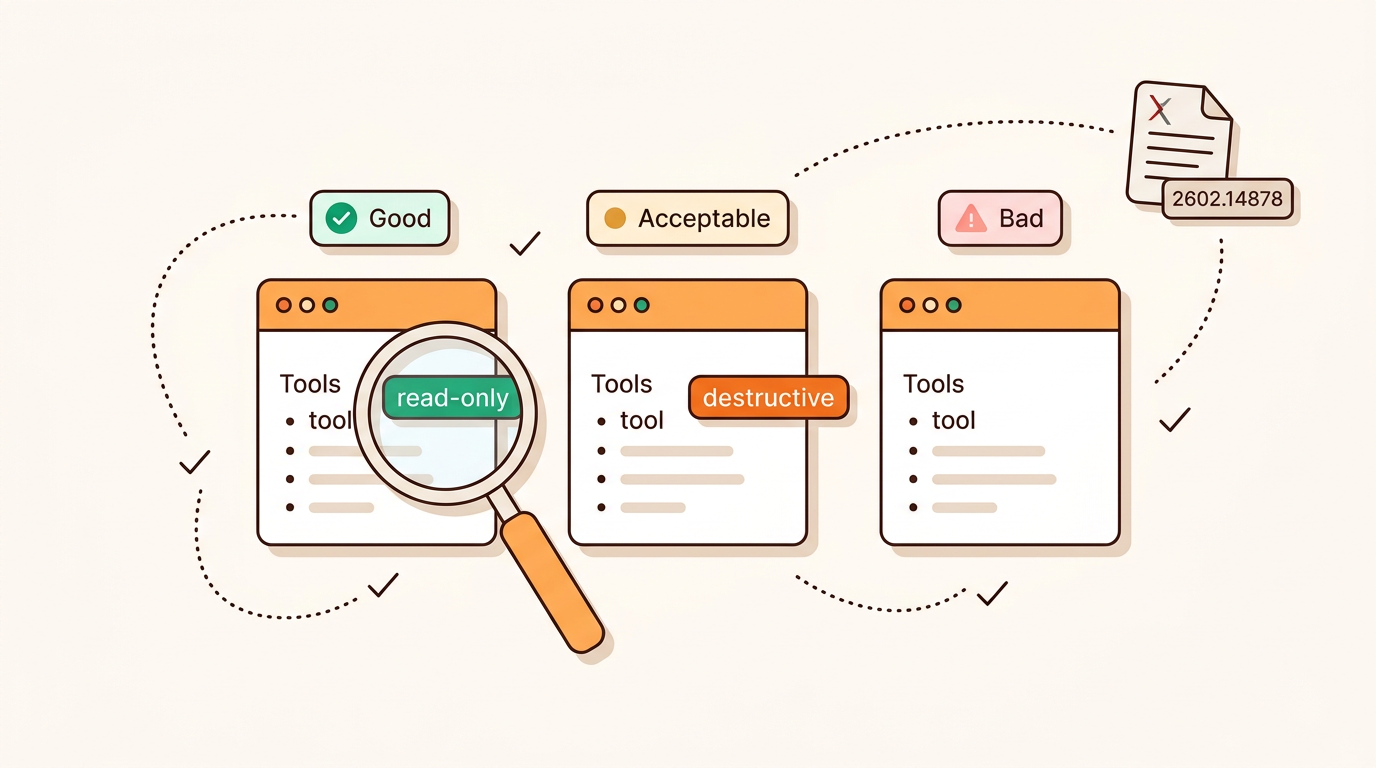

Every published server in the directory carries a qualityScore summary on its public /skills/<slug> page once it has been scored. The summary is read-only and shows four things: the bundle label (Good, Acceptable, or Bad) on the skill rubric, the bundle average (the six skill sub-scores collapsed to one 1-5 number for compact rendering), the per-tool counts split across Good, Acceptable, and Bad, and the timestamp of the most recent run.

The "Run scoring" affordance is never visible to anonymous visitors. It lives only on the workspace bundle page (/w/<workspace>/bundles/<slug>) and is gated to two roles: superusers, for platform-wide curation, and verified claimers, the maintainers who completed the claim flow on the listing (well-known verification plus a 6-digit OTP). Self-publishing a server doesn't grant scoring rights; the publisher has to claim the listing first. That gap is deliberate: it stops a third party from publishing someone else's server URL and silently inheriting the right to mutate its score.
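The gating rule reduces to a short permission check. This is a sketch under assumed names (`Viewer`, `can_run_scoring`, and its fields are all hypothetical), meant only to show that publishing and claiming are tracked separately:

```python
from dataclasses import dataclass, field


@dataclass
class Viewer:
    is_authenticated: bool = False
    is_superuser: bool = False
    published_slugs: set[str] = field(default_factory=set)  # listings this user published
    claimed_slugs: set[str] = field(default_factory=set)    # listings with a verified claim


def can_run_scoring(viewer: Viewer, bundle_slug: str) -> bool:
    """Only superusers and verified claimers of this listing may trigger scoring.
    Having published the listing is deliberately not enough."""
    if not viewer.is_authenticated:
        return False
    if viewer.is_superuser:
        return True
    return bundle_slug in viewer.claimed_slugs


publisher_only = Viewer(is_authenticated=True, published_slugs={"acme-billing"})
claimer = Viewer(is_authenticated=True, claimed_slugs={"acme-billing"})
print(can_run_scoring(publisher_only, "acme-billing"), can_run_scoring(claimer, "acme-billing"))
```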

When the maintainer triggers a re-score, the response carries an actionable_suggestions list synthesized from the rubric output, ordered into three priority bands:

- Server smells (priority 1) — repeated display names. The most reliable agent-misselection driver per Paper 2. Each entry lists the offending tool slugs.

- Bundle deficiencies (priority 2) — skill content missing or labeled Bad. Carries the rubric's reason text directly.

- Tool issues (priority 3) — tools labeled Bad, sorted by lowest average score first, so the worst offenders surface at the top. Each entry carries the tool slug and the reason.

The maintainer fixes the highest-priority items, hits re-score, and the new verdict replaces the old one in the directory. There is no historical score chart on the public page; the listed score is always the most recent run, because that's the artifact agents are about to use.

Design Decisions

A few choices depart from the papers.

Single judge instead of LLM-jury

Paper 1 uses a 3-model jury (gpt-4.1-mini, claude-haiku-3.5, qwen3-30b-a3b) for research rigor. We run a single Claude Haiku 4.5 pass. ICC reliability for the dimensions we kept sits in the "good" band (0.75-0.90) in Paper 1's results, so single-model variance on those dimensions is well below the smell threshold. A 3-model jury costs roughly 3x per score and adds latency that would push interactive re-scoring out of the maintainer flow. The published rubric ships with the structural N/A rules and tight prompt scaffolding to reduce single-judge drift on edge cases.

No scoring on credential-gated servers

If the canonical credential for a published listing is missing or empty, the score endpoint returns a zero-shape response with a discovery_status of no_credential or no_tools_discovered. We never pretend an unscored server scored zero, because that would mislead both maintainers and visitors. The publish flow makes this explicit: OAuth servers can be published without credentials, but the score won't render until the maintainer claims and binds one.
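One way to picture the zero-shape response: the counts are zero, but the label and average are explicitly absent rather than zero, so a renderer can't mistake "unscored" for "scored badly". Only the two discovery_status values come from the behavior described above; every other field name in this sketch is hypothetical:

```python
UNSCORED_STATUSES = {"no_credential", "no_tools_discovered"}


def unscored_response(discovery_status: str) -> dict:
    """Build a response for a listing that could not be scored. The label and
    average are None (not 0) so callers must handle the unscored case."""
    if discovery_status not in UNSCORED_STATUSES:
        raise ValueError(f"unexpected discovery_status: {discovery_status!r}")
    return {
        "discovery_status": discovery_status,
        "bundle_label": None,
        "bundle_average": None,
        "tool_counts": {"Good": 0, "Acceptable": 0, "Bad": 0},
        "actionable_suggestions": [],
    }


def should_render_score(response: dict) -> bool:
    """The public page shows a verdict only when a real label exists."""
    return response["bundle_label"] is not None


response = unscored_response("no_credential")
print(response["discovery_status"], should_render_score(response))
```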

Score is metadata about the listing, not user data

The summary is public; anyone can read it. The detailed per-tool breakdown and the actionable suggestions stay behind the maintainer panel because they are an editing surface, not a discovery surface. The split mirrors how the papers treat description quality: the verdict is a public property of the server, the diagnostic detail is for the author.

Annotations folded into existing dimensions

A separate "annotation completeness" sub-score would be easy to add but would over-weight what's structurally a small fraction of an MCP tool definition. Folding annotations into Disambiguation and Density keeps the rubric at four dimensions and reflects how agents actually read a tool: as a single payload of prose, schema, and annotations.

What This Means For Server Authors

If you publish an MCP server to the MCPBundles directory, the rubric runs against it. Three things move the score the most.

The first is giving every tool a clear, distinct purpose statement. Paper 2's biggest finding is that 73% of servers reuse display_name; Paper 1's most common smell is unclear_purpose at 56%. Same problem from two angles. Each tool needs a name and one sentence that says what it does that no other tool on the server does.

The second is populating annotations. readOnlyHint, destructiveHint, and idempotentHint are cheap structured fields the runtime actually reads, and skipping them shifts safety judgement onto the host where it's harder to enforce.

The third is writing skill content that isn't a tool index. The skill rubric penalizes pure duplication of tool descriptions, so the skill earns its top score by adding domain context, workflow guidance, and operational knowledge: the parts an agent can't reconstruct from individual tool definitions on its own.

Re-scoring is interactive: read the actionable suggestions, fix the highest-priority items, re-run. The Publishing & Claiming MCP Servers docs walk through both flows end to end.

References

- Hasan, M. M., Li, H., Rajbahadur, G. K., Adams, B., & Hassan, A. E. (2026). Model Context Protocol (MCP) Tool Descriptions Are Smelly! Towards Improving AI Agent Efficiency with Augmented MCP Tool Descriptions. arXiv preprint 2602.14878.

- Wang, P., Li, Y., Sun, Y., Liu, C., Liu, Y., & Tian, Y. (2026). From Docs to Descriptions: Smell-Aware Evaluation of MCP Server Descriptions. arXiv preprint 2602.18914.

- Model Context Protocol — Tool Annotations specification.